A new web app transforms music into a wild light show using generative AI.

Unless you’ve experienced it yourself, the phenomenon of synesthesia is nearly impossible to grasp. The neurological condition, in which one sense can trigger an experience through another (seeing sounds or feeling a color, for example), is relatively rare: just one in approximately 2,000 people is a synesthete. But a new app from Amsterdam-based studio Modem helps the average person understand how synesthesia works. It’s doing so by plugging a music synthesizer directly into Stable Diffusion to see what the AI comes up with.

The result of this approach doesn’t work or look anything like Lensa, DALL-E, Midjourney, or Stable Diffusion, despite using the same underlying technology. OP-Z Stable Diffusion, as the app is called, translates music into an explosion of animated images. All you need to make it work is Teenage Engineering’s OP-Z media synthesizer connected to the USB port of your computer.

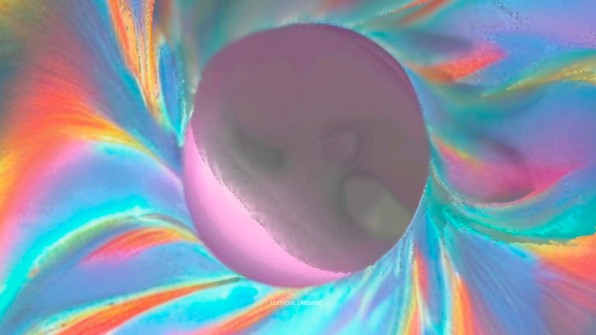

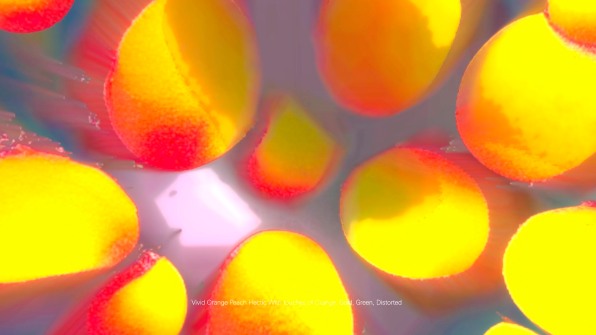

Play a song and the images smoothly transform into each other as if they were alive, following your music’s pitch, key, notes, rhythm, and beats per minute. It’s a trippy show, as if the AI has swallowed some mushrooms and a few drops of pure LSD. Wild colors flash and cycle, and objects like trees, tigers, and butterflies materialize out of nowhere, morphing into abstract images that contort and deform into the next picture. It’s a flower power journey that I haven’t seen since . . . the iTunes visualizer?

But no, this is not your parents’ rave animation. While the result may remind you of a more sophisticated version of Apple’s music screen savers, there’s more here than meets the eye.

“It’s not randomly creating images after the music,” says Bas Van De Poel, cofounder and innovation director at Modem. “Otherwise it would just be like a Winamp visualization.” If you weren’t a sentient human being in the ’90s, Van De Poel is referring to the MP3 player that showed surreal, brightly colored animations following the rhythm of any given song. Instead, he tells me, OP-Z Stable Diffusion follows the principles of synesthesia.

A TRIP INTO SOMEONE’S BRAIN

Synesthesia itself is wild. For the people who have it, it actually makes them see things that don’t really exist. Some people with synesthesia will see a color flash before their eyes when they hear specific words. Others will listen to music and experience different shapes, colors, and motion right in front of them.

Working with creative developer Bureau Cool, Van De Poel and his team used the synesthesia theories developed by medical experts and others who have documented or experienced the condition—like Dr. Richard E. Cytowic, psychologist Stephen Palmer, and composer Olivier Messiaen—to create the light show. The app transforms pitch, key, notes, rhythm, and BPM into text prompts that are fed into Stable Diffusion.

Pitch, for example, is translated into tonal value (how light or dark the image is), with words like dark, mysterious, dusky, light, luminous, or vivid. Key is represented by primary colors and some associated object (a G sharp, for example, will turn into “orange sunrise”). The notes define secondary colors, and so on. The result is pretty wild when you consider that you are seeing something similar to what people who have synesthesia see.
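For the technically curious, here is a rough idea of what that kind of mapping could look like in code. This is a minimal Python sketch, not Modem’s actual implementation: the function names, the use of MIDI pitch numbers (0 to 127), the dark-to-light ordering of the value words, and every table entry other than “orange sunrise” for G sharp are assumptions made purely for illustration. The step that hands the prompt to Stable Diffusion is left out.

```python
# Value words quoted in the article. Ordering them from dark to light
# is an assumption made for this sketch.
VALUE_WORDS = ["dark", "mysterious", "dusky", "light", "luminous", "vivid"]

# Key -> primary color plus an associated object.
# Only the G sharp entry comes from the article; the rest are hypothetical.
KEY_PHRASES = {
    "G#": "orange sunrise",
    "C": "red poppy",    # hypothetical
    "E": "blue ocean",   # hypothetical
}

# Note -> secondary color. All entries are hypothetical.
NOTE_COLORS = {
    "A": "violet",
    "D": "green",
}


def value_word(midi_pitch: int) -> str:
    """Pick a value word by splitting the 0-127 pitch range into equal bands."""
    clamped = max(0, min(127, midi_pitch))
    index = clamped * len(VALUE_WORDS) // 128
    return VALUE_WORDS[index]


def build_prompt(midi_pitch: int, key: str, notes: list[str]) -> str:
    """Combine value, key, and note descriptions into one text prompt."""
    parts = [value_word(midi_pitch), KEY_PHRASES.get(key, "")]
    parts += [NOTE_COLORS[n] for n in notes if n in NOTE_COLORS]
    # Skip empty entries so unknown keys do not leave stray commas.
    return ", ".join(p for p in parts if p)


if __name__ == "__main__":
    print(build_prompt(30, "G#", ["A", "D"]))
```

In a sketch like this, a low note played in G sharp yields a dark, orange-tinged prompt, while the same phrase played higher shifts toward “luminous” or “vivid.” A real system would also have to account for rhythm and BPM, which the article mentions but does not describe in detail.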

While it doesn’t work in true real time (Van De Poel says the processing power is not there yet), it is a brilliant demonstration of the infinite potential that these generative AIs have for bringing us closer to the creative Big Bang that is around the corner.

This article first appeared on https://www.fastcompany.com/
